Neural Flow 3202560223 Apex Node

The Neural Flow 3202560223 Apex Node is presented as a modular controller for edge-to-cloud orchestration. It claims to tier computation, route data selectively, and compress models to speed up inference. Skepticism is warranted: gains depend on workload, network conditions, and implementation detail. The premise invites scrutiny of latency, accuracy, and integration burden. What concrete results exist, and how do they scale across diverse environments? The discussion centers on practical feasibility and repeatable measurements, not promises.

What Is the Neural Flow 3202560223 Apex Node?

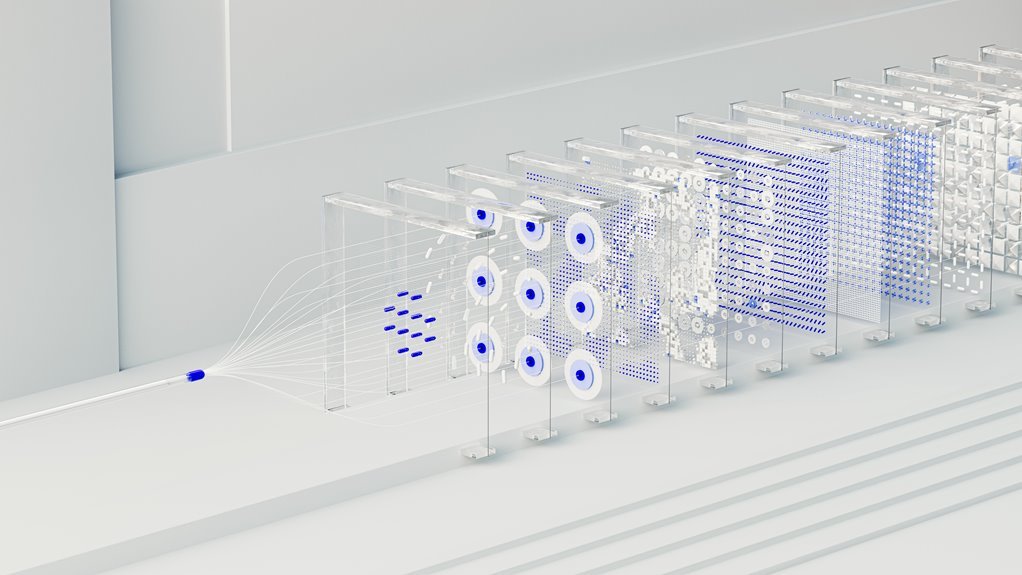

The Neural Flow 3202560223 Apex Node is described as a conceptual component of a broader neural architecture: a control point for orchestrating inference across devices. The description remains speculative. The name suggests modular control, and the references to edge acceleration and cloud inference imply distributed processing. Both the performance claims and the degree of autonomy the node is supposed to exercise deserve scrutiny.

How the Apex Node Accelerates Inference Across Edge and Cloud

The Apex Node is said to accelerate inference by coordinating localized processing on edge devices with centralized inference in the cloud. The described design targets latency through tiered computation and selective data routing: presumably, work that fits on the device stays there, and only requests that need more capacity are sent upstream. It also relies on model compression to shrink models and speed up handoffs between devices and cloud. Compression is claimed to cost no accuracy, but in practice it usually trades some accuracy for size, so readers should look for measured tradeoffs rather than hype.
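To make tiered computation and selective routing concrete, here is a minimal sketch of one possible routing rule. Nothing in it comes from an Apex Node specification: the `Request` and `Tier` types, the latency estimate, and the "prefer cloud when it fits the budget" policy are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Request:
    payload_bytes: int        # size of the input that would be uploaded
    latency_budget_ms: float  # end-to-end deadline for this request


@dataclass
class Tier:
    name: str
    compute_ms: float   # estimated inference time on this tier
    uplink_mbps: float  # 0 means no network transfer (local execution)
    rtt_ms: float       # fixed network round-trip overhead


def estimated_latency_ms(req: Request, tier: Tier) -> float:
    """Compute time plus, for remote tiers, round trip and upload time."""
    if tier.uplink_mbps <= 0:
        return tier.compute_ms
    transfer_ms = req.payload_bytes * 8 / (tier.uplink_mbps * 1e6) * 1e3
    return tier.compute_ms + tier.rtt_ms + transfer_ms


def route(req: Request, edge: Tier, cloud: Tier) -> str:
    """Hypothetical policy: use the cloud tier (assumed to host the larger
    model) when it meets the budget, otherwise fall back to the edge."""
    if estimated_latency_ms(req, cloud) <= req.latency_budget_ms:
        return cloud.name
    return edge.name


edge = Tier(name="edge", compute_ms=40.0, uplink_mbps=0.0, rtt_ms=0.0)
cloud = Tier(name="cloud", compute_ms=10.0, uplink_mbps=20.0, rtt_ms=30.0)
print(route(Request(payload_bytes=100_000, latency_budget_ms=100.0), edge, cloud))
print(route(Request(payload_bytes=100_000, latency_budget_ms=50.0), edge, cloud))
```

With these made-up numbers, the same payload goes to the cloud under a 100 ms budget and stays on the edge under a 50 ms budget, which is the sense in which any gain depends on network conditions as much as on the model.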

Real-World Workloads: Use Cases and Performance Wins

Real-world workloads are where the Apex Node is supposed to turn architectural theory into measurable gains by balancing edge-local processing against centralized inference. No universal claim holds up: edge optimization is a workload-dependent benefit, not a certainty. Latency reduction appears situational, while edge-cloud collaboration shows promise.

Model compression aids efficiency, though implementation details such as the compression method and the target hardware determine whether the gains are sustained.
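As one illustration of why implementation details matter, the sketch below applies symmetric 8-bit quantization to a short list of weights and reports the reconstruction error. Quantization is only one of several compression techniques, and the source does not say which one the Apex Node uses; the function names here are hypothetical.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization of floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Map the stored integers back to approximate float values."""
    return [q * scale for q in quantized]


def max_abs_error(original, restored):
    """Largest per-weight difference introduced by the round trip."""
    return max(abs(a - b) for a, b in zip(original, restored))


weights = [0.5, -1.27, 0.0, 1.0, 0.333]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
print("scale:", scale)
print("max error:", max_abs_error(weights, restored))
```

Each weight shrinks from a 32-bit float to one byte, at the cost of an error of up to half the scale per weight. Whether that error changes model accuracy is an empirical question for each model and dataset.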

A rigorous assessment means measuring latency and accuracy on representative workloads rather than relying on averages or vendor figures.
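A small harness along the following lines is one way to collect repeatable latency numbers. It is a sketch under stated assumptions: `measure_ms`, `percentile`, and `summarize` are hypothetical helpers, the workload is a stand-in, and the nearest-rank percentile is just one common definition.

```python
import math
import time


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list, with pct in (0, 100]."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def measure_ms(fn, runs=50, warmup=5):
    """Call fn repeatedly and return per-call wall-clock times in milliseconds."""
    for _ in range(warmup):  # discard cold-start effects
        fn()
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples.append((time.perf_counter() - start) * 1e3)
    return samples


def summarize(samples):
    """Report median, tail, and worst-case latency instead of a single mean."""
    return {
        "p50": percentile(samples, 50),
        "p95": percentile(samples, 95),
        "max": max(samples),
    }


def stand_in_workload():
    # Placeholder for a real inference call.
    sum(i * i for i in range(10_000))


print(summarize(measure_ms(stand_in_workload)))
```

Reporting p95 alongside p50 matters because routing and network effects tend to show up in the tail before they move the median.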

Getting Started: Integration, Tuning, and Best Practices

Practical integration with the Apex Node requires a disciplined approach: define data boundaries, establish clear latency targets, and map each workflow to edge-local processing or centralized inference as appropriate. Tune incrementally, and avoid overfitting a configuration to a single benchmark. A change counts as an improvement only if it yields measurable gains that are verifiable and repeatable across diverse workloads.
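One way to keep latency targets and workflow placement explicit is to record them as data and check measurements against them after every tuning step. The sketch below assumes a hypothetical `WorkflowTarget` record and made-up workflow names and numbers; it is not an Apex Node configuration format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkflowTarget:
    name: str
    placement: str        # "edge" or "cloud"
    p95_target_ms: float  # agreed latency target for this workflow


def failing_workflows(targets, measured_p95_ms):
    """Return the names of workflows whose measured p95 latency is
    missing or exceeds the target."""
    failures = []
    for target in targets:
        observed = measured_p95_ms.get(target.name)
        if observed is None or observed > target.p95_target_ms:
            failures.append(target.name)
    return failures


targets = [
    WorkflowTarget("keyword-spotting", placement="edge", p95_target_ms=50.0),
    WorkflowTarget("document-summary", placement="cloud", p95_target_ms=800.0),
]
measured = {"keyword-spotting": 42.0, "document-summary": 910.0}
print(failing_workflows(targets, measured))
```

Treating a missing measurement as a failure is a deliberate choice here: a workflow that was never benchmarked has not met its target.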

Conclusion

The Apex Node promises a neatly tiered world in which edge and cloud work in harmony and compression delivers faster inference. The gains, however, hinge on deployment discipline, careful data routing, and stubborn real-world variance. Analysts should weigh latency deltas against integration costs and remain skeptical of every claimed speedup until independent benchmarks show the performance is more than a clever orchestration of promises.